Android phones have become much more user-friendly over the years, but sometimes we need a little help in certain areas. Whether it’s magnifying the screen to make text easier to read or using Live Caption to take notes from a meeting, accessibility features show people that their phones will work for them regardless of any disability they may have. In honor of Global Accessibility Awareness Day, Google is introducing several features to make the Android experience even more inclusive.

Google’s new slew of accessibility features includes improvements to the Live Caption feature, getting AI to help create alt text, and providing more information about which locations are wheelchair-accessible.

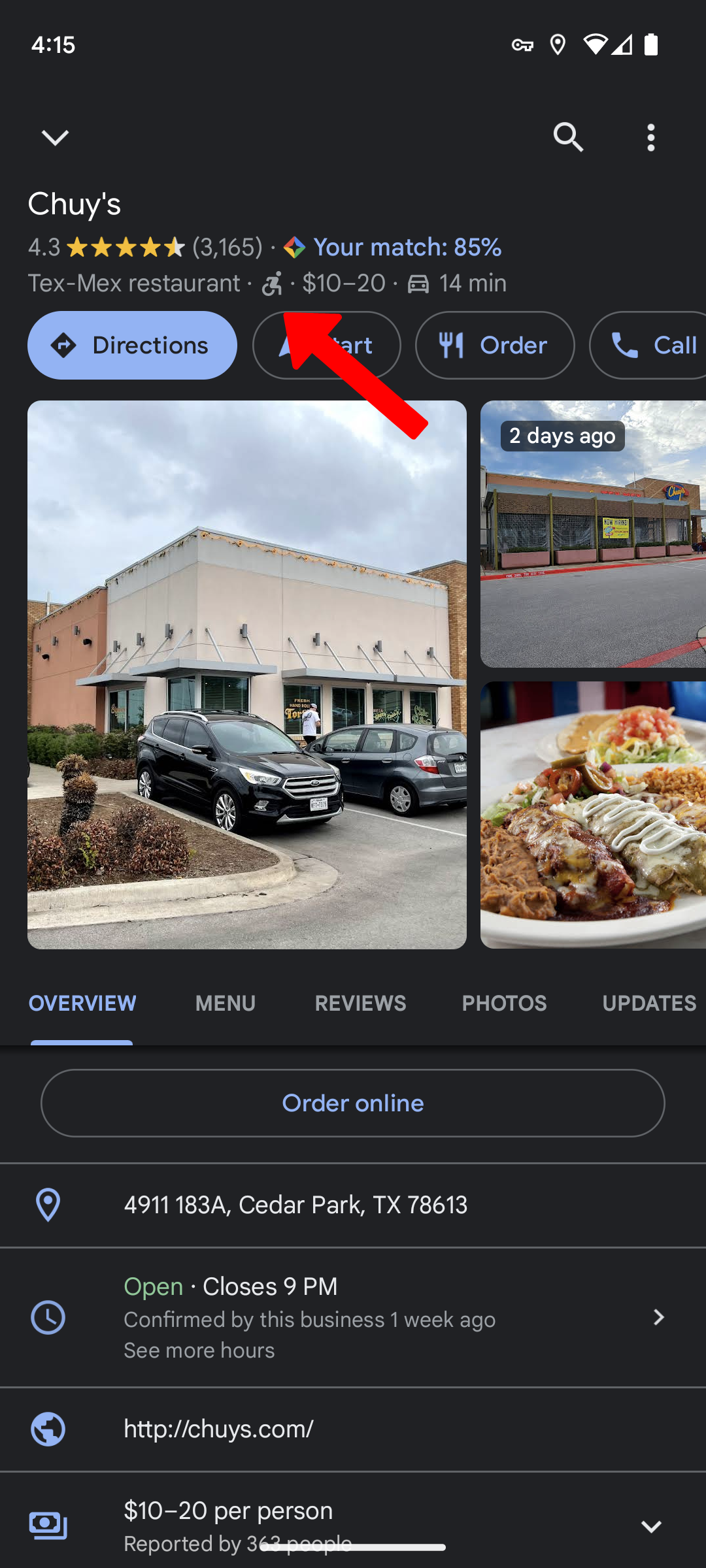

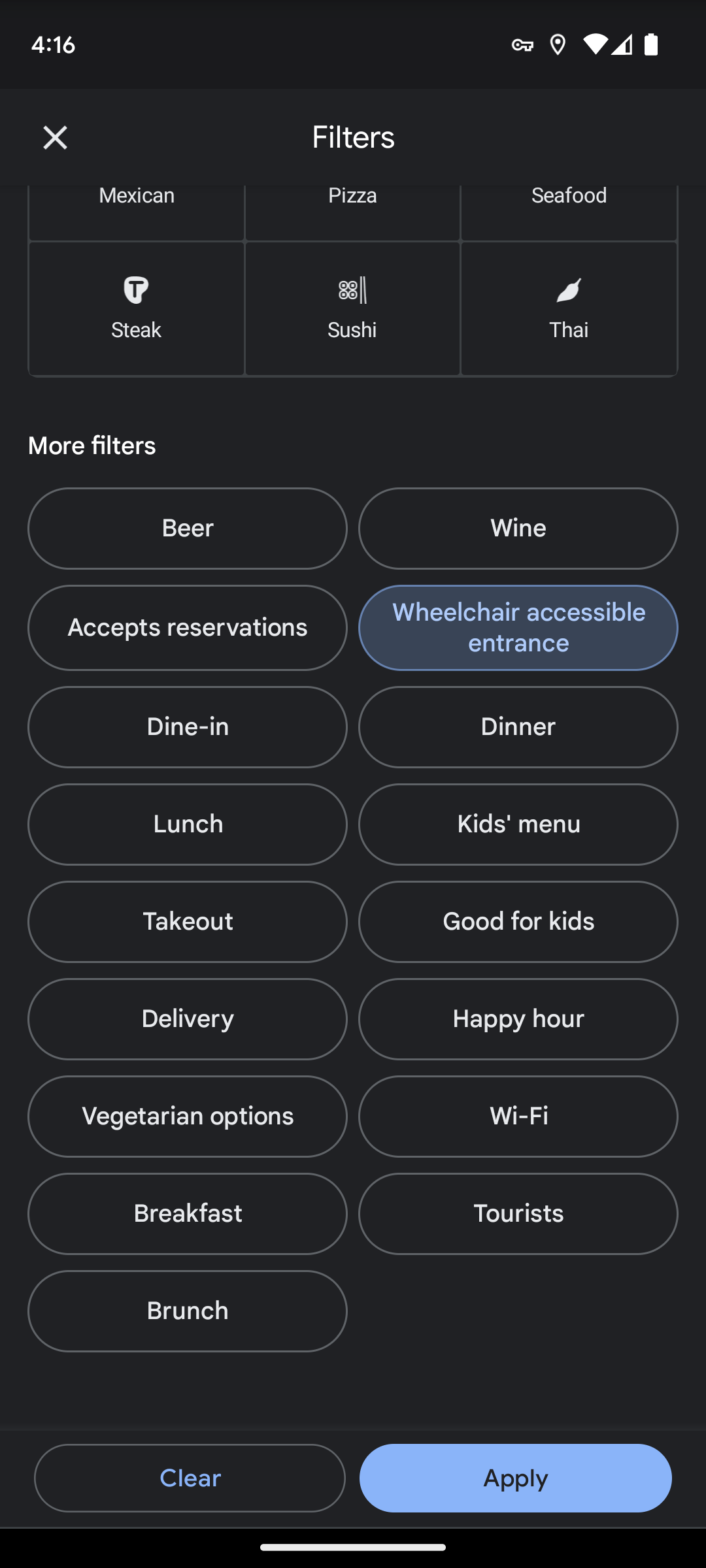

Since 2020, people have been able to use the Accessible Places feature on Google Maps to find out whether a place has ramps for wheelchairs or strollers. While the feature was useful, hiding it behind an opt-in made it less accessible than Google might have intended. To fix that, the company removed the opt-in requirement, and now anyone can check if a location has an alternative to stairs for those who can’t use them.

On the web browsing side, Google Chrome will be able to detect typos in URLs as they are typed, which can greatly help people with dyslexia and other learning disabilities, as well as people who are learning a new language. This feature is already available on desktop, but its arrival on mobile might take a few more months. Still, mobile users are getting a TalkBack update on Chrome for Android to help them better manage their tabs.

The improvements coming to Live Caption aren’t major, but there’s one critical element that will help people better communicate. On a phone call, users will be able to type their responses into the feature and have it read the message to the other person. It’s currently only available on Pixel 6 and 7 devices, but it will eventually land on older models as well as other Android phones.

As one might expect, Google’s push for inclusion will get some assistance from AI. The company wants to help people who have trouble seeing understand the images they encounter by using AI to create alt text and captions. It notes that many images either have low-quality alt text or captions or have none at all. To fix that, it will begin using AI to generate detailed descriptions so everyone can understand what they’re looking at on their devices.

Finally, Google teased more accessibility features coming to Wear OS 4, but details will only come later this year.

Not every disability is visible, and not everyone who uses accessibility features has a disability, so it’s essential that Google keeps working on bringing more features to life. With Android 14 on the way, it could take another step toward making the experience more inclusive by tweaking its design to be more legible when accessibility features are in use.
